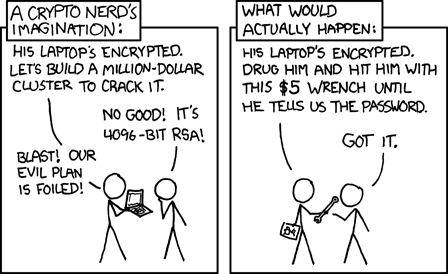

The problem with building a device that is good at keeping secrets is that it turns users into the weakest link.

From xkcd, CC-BY-NC 2.5

In practice, attackers need not go nearly as far as rubber-hose cryptanalysis to obtain passwords; a simple inspection checkpoint, verbal threat or subpoena is often sufficiently coercive.

Most security schemes facilitate the coercive processes of an attacker because they disclose metadata about the secret data, such as the name and size of encrypted files. This allows specific and enforceable demands to be made: “Give us the passwords for these three encrypted files with names A, B and C, or else…”. In other words, security often focuses on protecting the confidentiality of data, but lacks deniability.

A scheme with deniability would make even the existence of secret files difficult to prove. This makes it difficult for an attacker to formulate a coherent demand: “There’s no evidence of undisclosed data. Should we even bother to make threats?” A lack of evidence makes it more difficult to make specific and enforceable demands.

Thus, assuming the ultimate goal of security is to protect the safety of users as human beings, and not just their files, enhanced security should come hand-in-hand with enhanced plausible deniability (PD). PD arms users with a set of tools they can use to navigate the social landscape of security, by making it difficult to enumerate all the secrets potentially contained within a device, even with deep forensic analysis.

Precursor is a device we designed to keep secrets, such as passwords, wallets, authentication tokens, contacts and text messages. We also want it to offer plausible deniability in the face of an attacker that has unlimited access to a physical device, including its root keys, and a set of “broadly known to exist” passwords, such as the screen unlock password and the update signing password. We further assume that an attacker can take a full, low-level snapshot of the entire contents of the FLASH memory, including memory marked as reserved or erased. Finally, we assume that a device, in the worst case, may be subject to repeated, intrusive inspections of this nature.

We created the PDDB (Plausibly Deniable DataBase) to address this threat scenario. The PDDB aims to offer users a real option to plausibly deny the existence of secret data on a Precursor device. This option is strongest in the case of a single inspection. If a device is expected to withstand repeated inspections by the same attacker, then the user has to make a choice between performance and deniability. A “small” set of secrets (relative to the entire disk size, on Precursor that would be 8MiB out of 100MiB total size) can be deniable without a performance impact, but if larger (e.g. 80MiB out of 100MiB total size) sets of secrets must be kept, then archived data needs to be turned over frequently, to foil ciphertext comparison attacks between disk imaging events.

The API Problem

“Never roll your own crypto”, and “never roll your own filesystem”: two timeless pieces of advice worth heeding. Yet, the PDDB is both a bit of new crypto, and a lot of new filesystem (and I’m not particularly qualified to write either). So, why take on the endeavor, especially when deniability is not a new concept?

For example, one can fill a disk with random data, and then use a Veracrypt hidden volume, or LUKS with detached partition headers. So long as the entire disk is pre-filled with random data, it is difficult for an attacker to prove the existence of a hidden volume with these pre-existing technologies.

Volume-based schemes like these suffer from what I call the “API Problem”. While the volume itself may be deniable, it requires application programs to be PD-aware to avoid accidental disclosures. For example, they must be specifically coded to split user data into multiple secret volumes, and to not throw errors when the secret volumes are taken off-line. This substantially increases the risk of unintentional leakage; for example, an application that is PD-naive could very reasonably throw an error message informing the user (and thus potentially an attacker) of a supposedly non-existent volume that was taken off-line. In other words, having the filesystem itself disappear is not enough; all the user-level applications should be coded in a PD-aware fashion.

On the other hand, application developers are typically not experts in cryptography or security, and they might reasonably expect the OS to handle tricky things like PD. Thus, in order to reduce the burden on application developers, the PDDB is structured not as a traditional filesystem, but as a single database of dictionaries containing many key-value pairs.

Secrets are overlaid or removed from the database transparently, through a mechanism called “Bases” (the plural of a single “Basis”, similar to the concept of a vector basis from linear algebra). The application-facing view of data is computed as the union of the key/value pairs within the currently unlocked secret Bases. In the case that the same key exists within dictionaries with the same name in more than one Basis, by default the most recently unlocked key/value pairs take precedence over the oldest. By offering multiple views of the same dataset based on the currently unlocked set of secrets, application developers don’t have to do anything special to leverage the PD aspects of the PDDB.

The role of a Basis in PD is perhaps best demonstrated through a thought experiment centered around the implementation of a chat application. In Precursor, the oldest (first to be unlocked) Basis is always the “System” Basis, which is unlocked on boot by the user unlock password. Now let’s say the chat application has a “contact book”. Let’s suppose the contact book is implemented as a dictionary called “chat.contacts”, and it exists in the (default) System Basis. Now let’s suppose the user adds two contacts to their contact book, Alice and Bob. The contact information for both will be stored as key/value pairs within the “chat.contacts” dictionary, inside the System Basis.

Now, let’s say the user wants to add a new, secret contact for Trent. The user would first create a new Basis for Trent – let’s say it’s called “Trent’s Basis”. The chat application would store the new contact information in the same old dictionary called “chat.contacts”, but the PDDB writes the contact information to a key/value pair in a second copy of the “chat.contacts” dictionary within “Trent’s Basis”.

Once the chat with Trent is finished, the user can lock Trent’s Basis (it would also be best practice to refresh the chat application). This causes the key/value pair for Trent to disappear from the unionized view of “chat.contacts” – only Alice and Bob’s contacts remain. Significantly, the “chat.contacts” dictionary did not disappear – the application can continue to use the same dictionary, but future queries of the dictionary to generate a listing of available contacts will return only Alice and Bob’s key/value pairs; Trent’s key/value pair will remain hidden until Trent’s Basis is unlocked again.

Of course, nothing prevents a chat application from going out of its way to maintain a separate copy of the contact book, thus allowing it to leak the existence of Trent’s key/value pair after the secret Basis is closed. However, that is extra work on behalf of the application developer. The idea behind the PDDB is to make the lowest-effort method also the safest method. Keeping a local copy of the contacts directory takes effort, versus simply calling the dict_list() API on the PDDB to extract the contact list on the fly. However, the PDDB API also includes a provision for a callback, attached to every key, that can inform applications when a particular key has been subtracted from the current view of data due to a secret Basis being locked.

Contrast this to any implementation using e.g. a separate encrypted volume for PD. Every chat application author would have to include special-case code to check for the presence of the deniable volumes, and to unionize the contact book. Furthermore, the responsibility to not leak un-deniable references to deniable volumes inside the chat applications falls squarely on the shoulders of each and every application developer.

In short, by pushing the deniability problem up the filesystem stack to the application level, one greatly increases the chances of accidental disclosure of deniable data. Thus a key motivation for building the PDDB is to provide a set of abstractions that lower the effort for application developers to take advantage of PD, while reducing the surface of issues to audit for the potential leakage of deniable data.

Down the Rabbit Hole: The PDDB’s Internal Layout

Since we’re taking a clean-sheet look at building an encrypted, plausibly-deniable filesystem, I decided to start by completely abandoning any legacy notion of disks and volumes. In the case of the PDDB, the entire “disk” is memory-mapped into the virtual memory space of the processor, on 4k-page boundaries. Like the operating system Xous, the entire PDDB is coded to port seamlessly between both 32-bit and 64-bit architectures. Thus, on a 32-bit machine, the maximum size of the PDDB is limited to a couple GiB (since it has to share memory space with the OS), but on a 64-bit machine the maximum size is some millions of terabytes. While a couple GiB is small by today’s standards, it is ample for Precursor because our firmware blob-free, directly-managed, high write-lifetime FLASH memory device is only 128MiB in size.

Each Basis in the PDDB has its own private 64-bit memory space, which are multiplexed into physical memory through the page table. It is conceptually similar to the page table used to manage the main memory on the CPU: each physical page of storage maps to a page table entry by shifting its address to the right by 12 bits (the address width of a 4k page). Thus, Precursor’s roughly 100MiB of available FLASH memory maps to about 25,000 entries. These entries are stored sequentially at the beginning of the PDDB physical memory space.

Unlike a standard page table, each entry is 128 bits wide. An entry width of 128 bits allows each page table entry to be decrypted and encrypted independently with AES-256 (recall that even though the key size is 256 bits, the block size remains at 128 bits). 56 bits of the 128 bits in the page table entry are used to encode the corresponding virtual address of the entry, and the rest are used to store flags, a nonce and a checksum. When a Basis is unlocked, each of the 25,000 page table entries are scanned by decrypting them using the unlock key for candidates that have a matching checksum; all the candidates are stored in a hash table in RAM for later reference. We call them “entry candidates” because the checksum is too small to be cryptographically secure; however, the actual page data itself is protected using AES-GCM-SIV, which provides cryptographically strong authentication in addition to confidentiality.

Fortunately, our CPU, like most other modern CPUs, support accelerated AES instructions, so scanning 25k AES blocks for valid pages does not take a long time, just a couple of seconds.

To recap, every time a Basis is unlocked, the page table is scanned for entries that decrypt correctly. Thus, each Basis has its own private 64-bit memory space, multiplexed into physical memory via the page table, allowing us to have multiple, concurrent cryptographic Bases.

Since every Basis is orthogonal, every Basis can have the exact same virtual memory layout, as shown below. The layout always uses 64-bit addressing, even on 32-bit machines.

| Start Address | | |------------------------|-------------------------------------------| | 0x0000_0000_0000_0000 | Invalid -- VPAGE 0 reserved for Option | | 0x0000_0000_0000_0FE0 | Basis root page | | 0x0000_0000_00FE_0000 | Dictionary[0] | | +0 | - Dict header (127 bytes) | | +7F | - Maybe key entry (127 bytes) | | +FE | - Maybe key entry (127 bytes) | | +FD_FF02 | - Last key entry start (128k possible) | | 0x0000_0000_01FC_0000 | Dictionary[1] | | 0x0000_003F_7F02_0000 | Dictionary[16382] | | 0x0000_003F_8000_0000 | Small data pool start (~256GiB) | | | - Dict[0] pool = 16MiB (4k vpages) | | | - SmallPool[0] | | +FE0 | - SmallPool[1] | 0x0000_003F_80FE_0000 | - Dict[1] pool = 16MiB | | 0x0000_007E_FE04_0000 | - Dict[16383] pool | | 0x0000_007E_FF02_0000 | Unused | | 0x0000_007F_0000_0000 | Medium data pool start | | | - TBD | | 0x0000_FE00_0000_0000 | Large data pool start (~16mm TiB) | | | - Demand-allocated, bump-pointer | | | currently no defrag |

The zeroth page is invalid, so we can have “zero-cost” Option abstractions in Rust by using the NonZeroU64 type for Basis virtual addresses. Also note that the size of a virtual page is 0xFE0 (4064) bytes; 32 bytes of overhead on each 4096-byte physical page are consumed by a journal number plus AES-GCM-SIV’s nonce and MAC.

The first page contains the Basis Root Page, which contains the name of the Basis as well as the number of dictionaries contained within the Basis. A Basis can address up to 16,384 dictionaries, and each dictionary can address up to 131,071 keys, for a total of up to 2 billion keys. Each of these keys can map a contiguous blob of data that is limited to 32GiB in size.

The key size cap can be adjusted to a larger size by tweaking a constant in the API file, but it is a trade-off between adequate storage capacity and simplicity of implementation. 32GiB is not big enough for “web-scale” applications, but it’s large enough to hold a typical Blu-Ray movie as a single key, and certainly larger than anything that Precursor can handle in practice with its 32-bit architecture. Then again, nothing about Precursor, or its intended use cases, are web-scale. The advantage of capping key sizes at 32GiB is that we can use a simple “bump allocator” for large data: every new key reserves a fresh block of data in virtual memory space that is 32GiB in size, and deleted keys simply “leak” their discarded space. This means that the PDDB can handle a lifetime total of 200 million unique key allocations before it exhausts the bump allocator’s memory space. Given that the write-cycle lifetime of the FLASH memory itself is only 100k cycles per sector, it’s more likely that the hardware will wear out before we run out of key allocation space.

To further take pressure off the bump allocator, keys that are smaller than one page (4kiB) are merged together and stored in a dedicated “small key” pool. Each dictionary gets a private pool of up to 16MiB storage for small keys before they fall back to the “large key” pool and allocate a 32GiB chunk of space. This allows for the efficient storage of small records, such as system configuration data which can consist of hundreds of keys, each containing just a few dozen bytes of data. The “small key” pool has an allocator that will re-use pages as they become free, so there is no lifetime limit on unique allocations. Thus the 200-million unique key lifetime allocation limit only applies to keys that are larger than 4kiB. We refer readers to YiFang Wang’s master thesis for an in-depth discussion of the statistics of file size, usage and count.

Keeping Secret Bases Secret

As mentioned previously, most security protocols do a good job of protecting confidentiality, but do little to hide metadata. For example, user-based password authentication protocols can do a good job of keeping the password a secret, but the existence of a user is plain to see, since the username, salt, and password hash are recorded in an unencrypted database. Thus, most standard authentication schemes that rely on unique salts would also directly disclose the existence of secret Bases through a side channel consisting of Basis authentication metadata.

Thus, we use a slightly modified key derivation function for secret Bases. The PDDB starts with a per-device unique block of salt that is fixed and common to all Bases on that device. This salt is hashed with the user-provided name of the Basis to generate a per-Basis unique salt, which is then combined with the password using bcrypt to generate a 24-byte password hash. This password is then expanded to 32 bytes using SHA512/256 to generate the final AES-256 key that is used to unlock a Basis.

This scheme has the following important properties:

- No metadata to leak about the presence of secret Bases

- A unique salt per device

- A unique (but predictable given the per-device salt and Basis name) salt per-Basis

- No storage of the password or metadata on-device

- No compromise in the case that the root keys are disclosed

- No compromise of other secret Bases if passwords are disclosed for some of the Bases

This scheme does not offer forward secrecy in and of itself, in part because this would increase the wear-load of the FLASH memory and substantially degrade filesystem performance.

Managing Free Space

The amount of free space available on a device is a potent side channel that can disclose the existence of secret data. If a filesystem always faithfully reports the amount of free space available, an attacker can deduce the existence of secret data by simply subtracting the space used by the data decrypted using the disclosed passwords from the total space of the disk, and compare it to the reported free space. If there is less free space reported, one may conclude that additional hidden data exists.

As a result PD is a double-edged sword. Locked, secret Bases must walk and talk exactly like free space. I believe that any effective PD system is fundamentally vulnerable to a loss-of-data attack where an attacker forces the user to download a very large file, filling all the putative “free space” with garbage, resulting in the erasure of any secret Bases. Unfortunately, the best work-around I have for this is to keep backups of your archival data on another Precursor device that is kept in a secure location.

That being said, the requirement for no sidechannel through free space is at direct odds with performance and usability. In the extreme, any time a user wishes to allocate data, they must unlock all the secret Bases so that the true extent of free space can be known, and then the secret Bases are re-locked after the allocation is completed. This scheme would leak no information about the amount of data stored on the device when the Bases are locked.

Of course, this is a bad user experience and utterly unusable. The other extreme is to keep an exact list of the actual free space on the device, which would protect any secret data without having to ever unlock the secrets themselves, but would provide a clear measure of the total amount of secret data on the device.

The PDDB adopts a middle-of-the-road solution, where a small subset of the true free space available on disk is disclosed, and the rest is maybe data, or maybe free space. We call this deliberately disclosed subset of free space the “FastSpace cache”. To be effective, the FastSpace cache is always a much smaller number than the total size of the disk. In the case of Precursor, the total size of the PDDB is 100MiB, and the FastSpace cache records the location of up to 8MiB of free pages.

Thus, if an attacker examines the FastSpace cache and it contains 1MiB of free space, it does not necessarily mean that 99MiB of data must be stored on the device; it could also be that the user has written only 7MiB of data to the device, and in fact the other 93MiB are truly free space, or any number of other scenarios in between.

Of course, whenever the FastSpace cache is exhausted, the user must go through a ritual of unlocking all their secret Bases, otherwise they run the risk of losing data. For a device like Precursor, this shouldn’t happen too frequently because it’s somewhat deliberately not capable of handling rich media types such as photos and videos. We envision Precursor to be used mainly for storing passwords, authentication tokens, wallets, text chat logs and the like. It would take quite a while to fill up the 8MiB FastSpace cache with this type of data. However, if the PDDB were ported to a PC and scaled up to the size of, for example, an entire 500GiB SSD, the FastSpace cache could likewise be grown to dozens of GiB, allowing a user to accumulate a reasonable amount of rich media before having to unlock all Bases and renew their FastSpace cache.

This all works fairly well in the scenario that a Precursor must survive a single point inspection with full PD of secret Bases. In the worst case, the secrets could be deleted by an attacker who fills the free space with random data, but in no case could the attacker prove that secret data existed from forensic examination of the device alone.

This scheme starts to break down in the case that a user may be subject to multiple, repeat examinations that involve a full forensic snapshot of the disk. In this case, the attacker can develop a “diff” profile of sectors that have not changed between each inspection, and compare them against the locations of the disclosed Bases. The remedy to this would be a periodic “scrub” where entries have their AES-GCM-SIV nonce renewed. This actually happens automatically if a record is updated, but for truly read-only archive data its location and nonce would remain fixed on the disk over time. The scrub procedure has not yet been implemented in the current release of the PDDB, but it is a pending feature. The scrub could be fast if the amount of data stored is small, but a re-noncing of the entire disk (including flushing true free space with new tranches of random noise) would be the most robust strategy in the face of an attacker that is known to take repeated forensic snapshots of a user’s device.

Code Location and Wrap-Up

If you want to learn more about the details of the PDDB, I’ve embedded extensive documentation in the source code directly. The API is roughly modeled after Rust’s std::io::File model, where a .get() call is similar to the usual .open() call, and then a handle is returned which uses Read and Write traits for interaction with the key data itself. For a “live” example of its usage, users can have a look at WiFi connection manager, which saves a list of access points and their WPA keys in a dictionary: code for saving an entry, code for accessing entries.

Our CI tests contain examples of more interesting edge cases. We also have the ability to save out a PDDB image in “hosted mode” (that is, with Xous directly running on your local machine) and scrub through its entrails with a Python script that greatly aids with debugging edge cases. The debugging script is only capable of dumping and analyzing a static image of the PDDB, and is coded to “just work well enough” for that job, but is nonetheless a useful resource if you’re looking to go deeper into the rabbit hole.

Please keep in mind that the PDDB is very much in its infancy, so there are likely to be bugs and API-breaking changes down the road. There’s also a lot of work that needs to be done on the UX for the PDDB. At the moment, we only have Rust API calls, so that applications can create and use secret Bases as well as dictionaries and keys. There currently are no user-facing command line tools, or a graphical “File Explorer” style tool available, but we hope to remedy this soon. However, I’m very happy that we were able to complete the core implementation of the PDDB. I’m hopeful that out-of-the-box, application developers for Precursor will start using the PDDB for data storage, so that we can start a community dialogue on the overall design.

This work was funded in part through the NGI0 PET Fund, a fund established by NLNet with financial support from the European Commission’s Next Generation Internet Programme.

I’ve long been fascinated with deniable encryption, and mourn the loss of the rubber hose filesystem.

I am curious if one could port the key concepts onto a 32-bit microcontroller and expose attached flash as a USB block device. If would require custom code to push new bases to the run-time over USB, but could make a very nice deniably encrypted portable drive. This would remove _all_ application-awareness (as long as applications didn’t, as you pointed out, cache paths and the like).

Very cool set of concepts and constraints. While they seem solid to my amateur eyes, you should look for qualified cryptanalysis for your key derivation and encryption protocols.

Good work!

-Bob

See Oblique Drive.

This sounds like a fascinating project!

Correct me if I’m wrong, but isn’t one implication of the deniability here that there is no stored list of bases? This seems like a hugely important UX detail; if you forget what you have stored (or what you named the various bases), the periodic requirement to unlock *all* the bases at once sounds… challenging, at best.

Am I missing something, or do you have further thoughts on usage patterns to mitigate? Something like built in support for a “password manager” style basis which just records the names of all the other bases which exist. So you only have to remember the one (“my.secret.bases”), and you can have the system automatically add the basis names to my.secret.bases contents if my.secret.bases is unlocked when creating new bases?

You are correct, it is a fundamental UX challenge to remember usernames and passwords.

Your suggestion is a good one, and actually anyone is free to add a dictionary to help them remember the names of their secret Bases. However, if that dictionary was stored in a Basis that had a standardized name, attackers would know to specifically demand the password to unlock that Basis, so they can learn the full extent of other secrets in the device, thus creating a roadmap for further demands.

It’s a sort of self-circular problem — any standardized “hook” to help a user remember something is also the foundation of a demand for more information about that hook. However, if someone has come up with their own unique solution to the bootstrapping problem, they should feel empowered to use it.

The practical solution to this problem will also vary depending upon the user’s actual threat model. Users with a nominally secure location to operate out of (such as their home) can simply write down a list and keep it there, or perhaps they can use a second Precursor device that doesn’t travel to help store the references.

A simplified example of this scenario is if you want to be able to travel with a stored value wallet, but you don’t want to have to worry about it being copied if you’re forced to turn over passwords at a security checkpoint to access your destination. If you happen to forget the Basis name and/or password for the wallet while you’re travelling, you’ll eventually be able to go home and unlock it, but at least the stored value hasn’t been compromised. Unfortunately, users that are under constant threat and have no secure location to operate out of cannot use this technique.

That being said, the Basis name isn’t critical, just the password is (assuming you’re not re-using passwords across all your Bases). I personally will probably just name secret Bases according to a simple and disclosable method, because even if an attacker could guess the name of a Basis it won’t unlock or disclose anything about its existence — or non-existence — without the matching password.

Managing that password, of course, is now the problem. I don’t think there is any shortcut around that. This isn’t necessarily a feature, but I would observe that being prompted on a semi-weekly basis to enter credentials is probably a decent way to reinforce them in your memory.

Fantastic post! A possible problem: if you’re unlocking a basis by simple trial decryption using AES-GCM-SIV, you can find a weird edge case that breaks the consistency of your storage. AES-GCM-SIV is not “key-comitting” which means that someone can construct ciphertexts which decrypt to two different values under two different keys.

https://keymaterial.net/2020/09/07/invisible-salamanders-in-aes-gcm-siv/

It’s probably not a security issue but it also is probably not a desirable feature for PDDB. One mitigation is to simply use a key-committing AEAD. Unfortunately there aren’t any standardized solutions. Simplest way is just encrypt-then-HMAC. Here’s some more info. Feel free to email me if you wanna chat about it.

https://datatracker.ietf.org/meeting/110/materials/slides-110-cfrg-caead-00

https://github.com/BLAKE3-team/BLAKE3/issues/138

https://eprint.iacr.org/2020/1153.pdf

Thank you very much for this reply! This is exactly the sort of feedback I’m looking for.

I’ll post some initial thoughts here so others following along can see where this is headed, but I’ll also email you in case you prefer to take the conversation offline.

I’m trying to understand the nature of the Salamanders in AES-GCM-SIV based on your paper, but this is what I can glean so far:

– The plaintext needs to be in a format where something like a comment field can be brute-forced into the result early on by guessing ciphertexts.

– In other words, while one can construct two keys which can pass the “auth” test of AES-GCM-SIV, one can perhaps manipulate the first few bytes in the message to be a specific value?

– The tail end of the post has the statement “This means, in almost all cases, we can construct an invisible salamander with only adding two sacrificial blocks, with the same caveat that the two plaintexts need to be partially brute forced.” Does this imply that if the length of the ciphertext is fixed, one cannot use this method to construct a salamander?

In the PDDB, AES-GCM-SIV is used with the following properties:

– Block size is constant and fixed. The ciphertext, including nonce and MAC, always results in a 4096-byte block. There is no scenario where a length extension is allowed, when data cannot fit in one block it is split, and another full 4096-byte block is allocated.

– A trial key has to pass several tests to be validated:

(1) It has to pass decryption of a 128-bit page descriptor that uses AES-ECB with a short nonce and checksum on the inside. This is assumed to be trivial to fake.

(2) The same key that passes (1) now must also decrypt the target, 4k-sized AES-GCM-SIV blocks correctly. Each block has a separate nonce and MAC.

(3) At the very least for a Basis to be thought as valid, it must decrypt one AES-GCM-SIV block that contains the root Basis record. The top of this record consists of a 4-byte journal number, a 4-byte magic number, and a 4-byte revision number, then the name of the Basis. The journal number can be “anything”, so the attacker would need to brute-force a ciphertext that can create an 8-byte sequence exactly. That’s probably not iron-clad, but I’m guessing it’s also not trivial? However, assuming they can succeed with that, the name of the Basis would be garbage, and it would not equal the name of the Basis the user requested, and would thus still be rejected…

– If the key can’t successfully decrypt the root record, the Basis is effectively non-existent and no other blocks are attempted.

– If an attacker wanted to inject a malicious block somewhere inside the database, they would then need to:

(1) Cause an AES-ECB collision on the page table (probably trivial)

(2) Craft a salamander with AES-GCM-SIV on the Basis Root record that does not extend the length of the block of data, contains the correct magic and version numbers, and matching Basis name.

(3) Craft a second salamander with the same key that can fake a directory entry for the target data

(4) Craft a third salamander with the same key that encodes the target data they want to fool the user into accepting.

This seems like it might not be likely given that you have to link the results of several blocks together, but I’m not sure. I’d love to hear your thoughts about the feasibility of the same key being used on several different blocks all requiring some amount of matching plaintext in order for the system to be foiled.

The more likely attack then is that maybe an attacker can use a salamander to trick the system into trying to load a malicious Basis descriptor that contains for example a string length field that is incorrect, causing data to be copied to a buffer that overflows its bounds, thus leading to code execution.

That being said, Rust does help with avoiding that because all its arrays are bounds-checked and we don’t use `unsafe` to simply map disk data into RAM, all strings are de-serialized “the slow way” using safe code only. I just took a look at the code for deserializing the root block and I don’t see any obvious paths for code injection even if an attacker can fully control the plaintext within the salamander, and same for the dictionary and key descriptors.

Anyways, thanks for the feedback, I’ll also ping you by email in case you prefer to respond off-line!

Another comment mentioned the non-desireability of common hooks into the bases. I think it’s a very tough UX problem either way, but even moreso if there isn’t a common basis. It might be interesting to consider bases that can be explicitly setup with false-keys; giving the attacker the false-password will “successfully” decrypt the basis with the false-plaintext.

Could something like this work with SQLite? It is, after all, the de-facto standard for mobile application persistent data storage in billions of devices.

Each basis would be a separate database that is connected with ATTACH DATABASE. Tables of the same name in different bases would be merged with UNION into a single top level virtual table using a temporary VIEW. Writing to this virtual table can be performed with INSTEAD OF triggers that send the modification to the topmost basis.

This would obviously not be compatible with things like UNIQUE or FOREIGN KEY constraints but otherwise most standard SQL should work.

I think the idea of “Bases” behaving as free space in PDDB is brilliant, the trade-off of remembering the names is fine (worst case, there is always pen and paper stored securely).

BTW:

Is there any hardware design mitigation against power analysis (CPA, DPA, etc.) and EM fault injection in Precursor? I checked the VexRiscv SpinalHDL source code and noticed that the AES plugin (AesPlugin.scala) uses conventional SBOX tables and mixing rounds, but wasn’t sure if that part has been customized in the Precursor code. Also curious if the CPU state machine does anything to protect against EM fault injection.

The hardware isn’t specifically hardened against CPA, DPA or EM fault injection. The SHA block comes from OpenTitan and it is supposedly hardened against that, and the Curve25519 stuff advertises that it’s constant-time. The AES block inside the CPU hasn’t been audited against such attacks, but it hasn’t been a big focus, mainly because the WBSTAR exploit is so powerful. You don’t need to go that far to blow the hardware security up: see https://www.bunniestudios.com/blog/?p=5979 for an in-depth discussion of that issue.

The TL;DR is that if you really need to protect something against an attacker who will gain unlimited and potentially destructive physical access to the device, the best answer I have is to use battery-backed root keys and pot the device in glue: there is a high chance the secrets will be erased before they are extracted. But so long as the design is in a Spartan-7 FPGA, it’s not going to be as strong against physical extraction attempts as many hardened IC designs are. That being said, one of the assumptions of the PDDB is that the attacker can have the root key (e.g. they were successful in the WBSTAR root key extraction attempt) and even with that they cannot prove or disprove the existence of any additional secret data.

Also, the device does come with a metal can to shield out the FPGA, so the CPU is basically in a Faraday cage once it is sealed. EMC testing shows that it’s pretty darn quiet, so it should do alright against practical arms-length RF attacks, both in terms of side channels and fault injection. But if you’re able to solder to the board and glitch the power supply, you can probably wreck all the havoc (but anyways you should just do a WBSTAR key extraction).

Thanks for the detailed explanation. I’m starting to understand where the PDDB requirements originated from.

After giving it some thought, being completely transparent about the known weaknesses in the components used as you have is better in the long run compared to picking a new “unknown” part which is only secure in the sense no one has researched it properly yet. I like this approach, the information makes it possible to design apps for Xous to improve the security as well.

I can’t wait to get started with development of some app ideas, got Rust in Linux, will get Xous next. Does the emulation of Precursor (mentioned here: https://github.com/betrusted-io/betrusted-wiki/wiki#hello-world) work stand-alone or do you need the actual hardware to test the compiled programs?

The “hosted mode” emulation should work stand-alone out of the box — you should be able to run `cargo xtask run` and it will “just work”.

Important caveats are that “hosted mode” is mainly for UI/UX development — most of the hardware and underlying crypto primitives are not actually present, there are just stubs that report fake values to keep the UX sane.

There’s an internal flag you can flip to auto-initialize the PDDB in hosted mode, but what will happen is on every boot the PDDB is formatted as if the disk is blank using some sham default passwords (and all-0 AES keys). We don’t currently have a way to specify a persistence file for the PDDB between runs in hosted mode, but if someone needed it it wouldn’t be terribly hard. This is because we also have a mode where Xous automatically runs a battery of CI tests against the PDDB, and does incremental snapshots of the ciphertext and keys for off-line analysis of correctness. Wedging in a retrofit to make that go the other way (load in the snapshot instead of writing it out) would probably be an afternoon project for me.

Also note that if you’re looking to do development on the kernel or hardware drivers, we also have a register-accurate simulation that uses Renode. This /also/ doesn’t emulate PDDB persistence between runs, because we mainly use it to debug hairy kernel and loader faults, which is a layer lower than the PDDB.

The distinction is that in “hosted mode” Xous actually runs natively on your host machine — it’s actually using your OS’s native threads and 64-bit pointers to emulate the kernel system calls, running x86 instructions. In Renode, Xous runs on an instruction-accurate RV32-IMAC emulator, so it’s using 32-bit pointers and running the actual instructions that would run on real hardware, making it much better for debugging things like concurrency bugs or failed syscalls.

Regarding the meme above. There are a lot of cryptographic systems with self self destruct keys. Encrypted hard drives for example. If your ballsy enough you could give the destruction key in interrogation which would were the drive when they entered it. Now depending on the situation that may be a death sentence cause you’d no longer be useful, but neither would the drive cause the data would have formatted.

A self destructing drive isn’t useful against an adversary who already has an image of the drive. Potentially a fun feature though against less advanced adversaries :)

I didn’t read the blog nor know it’s context. I’m getting ready for work. This is just regarding the meme. If I recall when I’m off I’ll read it than.

Feels like an adversary that is happy to use a $5 wrench, would be fairly happy enforcing a rule the all devices going past a checkpoint must fill their empty space.

Replicate the image, and don’t send the replica past the checkpoint.

What if at first-boot you allocate some number of Bases and throw away the keys, leaving them indistinguishable from actual decryptable user-created ones? Plausibly those are not decryptable by me.

Then instead of risking data loss, you could have a list of concrete list of free blocks, but that list would be kind of useless because there’s no way to prove what’s what in it.

Your approach is stronger, but also seems harder to manage.

On another note, is the number of write cycles endured by a region of a flash chip something that can be measured by an attacker? (Maybe response time?) That might leak what areas were containing user data versus thrown-away random data. (Applies to both schemes)

In brief: There is no such thing as plausible deniability. US CBP (by direct knowledge), and by extension any other US government agency that’ll have an interest in this sort of stuff, knows about and keeps up to date with whatever’s out there. If you use anything with “plausible deniability” they’ll just keep asking for further access until they get all the way down.

They’ve very, very good at getting people to cooperate. I’ve seen them get through other “plausible deniability” systems.

And that’s nothing compared to organizations who don’t have to worry about legal niceties.

[…] Huang has created a Plausibly Deniable […]

[…] Huang has created a Plausibly Deniable […]

The term the cartoon is looking for is “rubber hose cryptography”. You use the rubber hose so it won’t leave marks.

Hi,

Echoing previous comments — the technical part of the PD solution here looks brilliant to my layman’s eyes; but I do think there is a problem: if the opponent has certain knowledge you have something to hide, such as billions of crpyto, aren’t we simply adding one more iteration of the $5 wrench xkcd example? That is, I know you have a bitcoin wallet and passphrase, where is it …

Plausible deniability status with the assumption that knowledge that you have something is unknown and you’d like to keep the adversary from discovering that knowledge. By definition, if it’s known you have such a Bitcoin wallet, you cannot deny it.

There are other plausible denials you can make, such as “I can’t remember the password” or “I have no idea what the password is, it’s kept by [some inaccessible third party]” or other claims about inaccessibility of the keys/cyphertext. These claims being true is also a potential tactic, depending on the circumstances.

Of course, what some coercive adversary will believe can be difficult to predict and they could plausibly disbelieve even very true denials firmly enough to make your life *very* unpleasant in the time it takes them to decide they can’t “persuade” you to recant…

As with pretty much any security measure, it’s about cost/benefit calculations. There’s a cost to coercion (be it public relations, politics, finances, conscience, time…) and at some point, the value you place on keeping your secrets, the plausibility of your denials, and the costs of applying coercion add up to more than the value they place on getting at the information they seem and the likelihood they estimate of getting them (whether they think you’re not going to crack or they think your denials are plausible, or whatever combination of the two).

The more you can bolster the plausibility of your denials (e.g. by removing means to *disprove* them) the less you need cast iron will to resist coercion do tip that balance on your favour.

The cost of inconvenience through locking your secrets up in secure systems that are harder to manage needs to be weighed up against your threat model which will include how you expect a potential adversary to see the cost benefit analysis from their side, of course, so you’ll come to a different conclusion depending on the nature of your secrets and adversaries. The CIA are no angels, but if your risk coming into the hands of some suitably motivated psychopathic warlord in some lawless backwater, you might want to plan for the possibility that keeping you in a dungeon for decades is a small cost to them…

LEO:

You have a precursor and we know it can provide plausable deniability, now tell us your hidden secrets or we hit you with a $5 wrench.

Citizen that isn’t using the plausable deniability feature on his precursor:

Shit…

Unfortunately, there are at least three major issues with the whole hardware plausible deniability concept.

One, it is workable only for more or less “local” use where any checks would be either covert or unexpected. For border control and similar, as someone already mentioned, you will most likely be required to wipe/scramble all undeclared space, making putting any data into PD storage useless.

But even that hinges on widespread use of such devices, otherwise, simply having one makes any (even true) denial implausible instead.

And the thrird point is… Whatever the hardware, the human using it will just be hooked up to a polygraph, and then all the plausibility of these denials goes outta the window.

[…] Huang has created a Plausibly Deniable […]

[…] graphics abstractions for modals and multi-lingual text, storage (in the form of an encrypted, plausibly deniable database called the PDDB), trusted boot, and a key management library with self-provisioning and sealing […]

[…] I described the Plausibly Deniable DataBase (PDDB). It’s a filesystem (like FAT or ext4), combined with plausibly deniable full disk encryption […]